Machine learning is a helpful forecasting tool, but challenges remain

Hopes have risen in recent years over the potential contribution of machine learning to economic forecasting. But the pandemic has posed a major challenge, with well-publicised hiccups in the algorithms used in marketing, inventory management and a range of other fields. How has the field of macroeconomics fared?

Prior to the pandemic, the evidence suggested that machine-learning solutions were well suited to short-term macroeconomic problems, at least during periods of relative stability. One example is GDP ‘nowcasts’ – high-frequency, short-term forecasts of GDP ahead of publication of the official data. Estimates derived using machine learning proved significantly more accurate than those from conventional statistical methods in pre-coronavirus years.

The pandemic recession put a dent in the performance of such forecasts, however. In doing so, it shone a spotlight on some of the limitations of machine learning in macroeconomics.

A fundamental problem is that the algorithms currently in use still cannot capture the underlying structure of economies, including the long-run relationships and second-round effects common in macroeconomics. This limits their use in scenario analysis – such as the generation of alternative outlooks for the global economy, based on the future evolution of the Covid-19 pandemic – and hence their usefulness to businesses in the development of appropriate contingency plans.

The pandemic has also highlighted the importance of human involvement. During the pandemic, we have turned to non-traditional indicators of economic activity, including mobility trends, restaurant bookings and measures of lockdown stringency. While such indicators are both informative about the current state of key sectors of the economy and more timely than traditional metrics, the limited data sets involved present a major challenge for machine learning and other statistical approaches.

The Oxford Economics approach is to combine our economists’ expectations for the very near term, based in part on interpretation of these alternative sources of data, with state-of-the-art macroeconomic modelling. The usefulness of such an approach is illustrated by back-testing analysis.

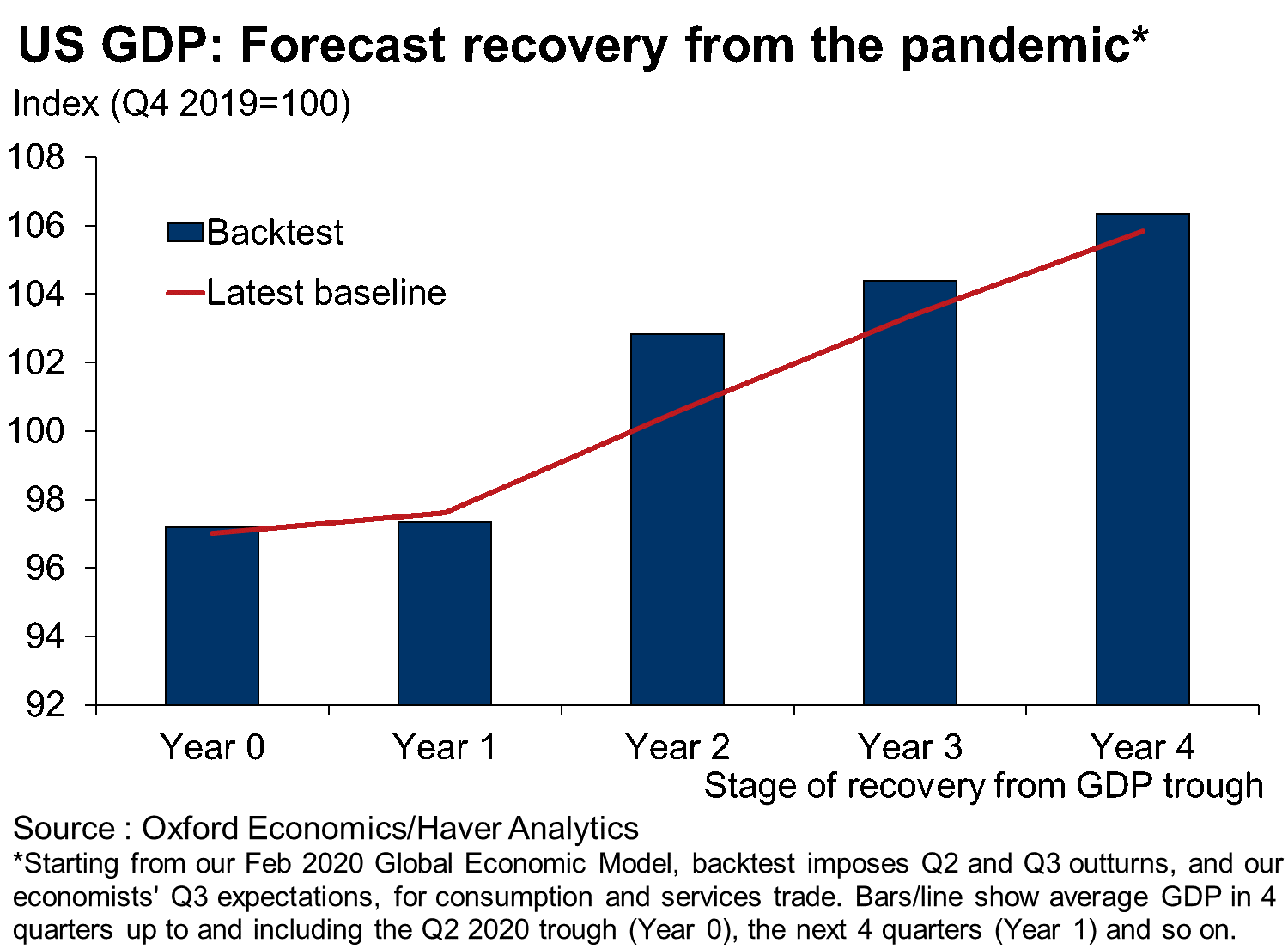

The starting point in the back-testing exercise was our Global Economic Model as it stood in February 2020, during the early stages of the pandemic. We then imposed in our model the actual outturns in Q1 and Q2 2020 for consumption and services trade – two of the areas most directly affected by public health restrictions – and our economists’ expectations of the Q3 2020 outturns for these variables prior to their official release.

We found that the simulated recovery path (which doesn’t incorporate official outturns for the most recent quarter) broadly matches our very latest baseline forecast (which does incorporate the very latest official data releases). The similarities extend to the medium term, implying comparable levels of economic scarring from the pandemic.
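The mechanics of a back-test of this kind can be illustrated with a minimal Python sketch. It is not the Global Economic Model: the one-equation rule below (growth reverting to a trend rate), the function names, the parameter values and every number are hypothetical and chosen purely for illustration. The sketch overrides the first few quarters of a projection with imposed values, lets the toy rule generate the rest of the path, and then measures how far that path sits from a comparison path.

```python
def simulate_with_imposed_outturns(last_growth, imposed, horizon, rho=0.5, trend=0.5):
    """Project quarterly growth (%), overriding the first quarters with imposed values.

    Hypothetical toy rule, NOT a structural model: once the imposed quarters
    run out, growth closes a share (1 - rho) of its gap to `trend` each quarter.
    """
    path = []
    prev = last_growth
    for t in range(horizon):
        if t < len(imposed):
            # Imposed outturn or economist judgement replaces the model's own value.
            growth = imposed[t]
        else:
            # Toy reversion of growth towards the assumed trend rate.
            growth = trend + rho * (prev - trend)
        path.append(growth)
        prev = growth
    return path


def mean_abs_gap(path_a, path_b):
    """Average absolute difference between two paths over their common length."""
    pairs = list(zip(path_a, path_b))
    return sum(abs(a - b) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    # Made-up figures: two imposed "outturns" plus one judgement-based quarter.
    imposed = [-2.0, -10.0, 8.0]
    simulated = simulate_with_imposed_outturns(0.4, imposed, horizon=6)

    # Made-up comparison path standing in for a later baseline forecast.
    baseline = [-2.0, -10.0, 7.5, 4.0, 2.5, 1.5]

    print("simulated:", simulated)
    print("mean absolute gap:", mean_abs_gap(simulated, baseline))
```

A small gap between the two paths is the toy analogue of the simulated recovery broadly matching the latest baseline. In practice the comparison would be run on a full structural model, where imposing outturns for one variable feeds through to the others.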

Overall, it is clear that machine learning has a role to play in enhancing short-term forecasting efforts. But experience during the pandemic confirms that machine learning should be viewed as a tool to be used in conjunction with the careful analysis of an experienced economist using a structural model. This informs the modelling approach that we employ today at Oxford Economics.